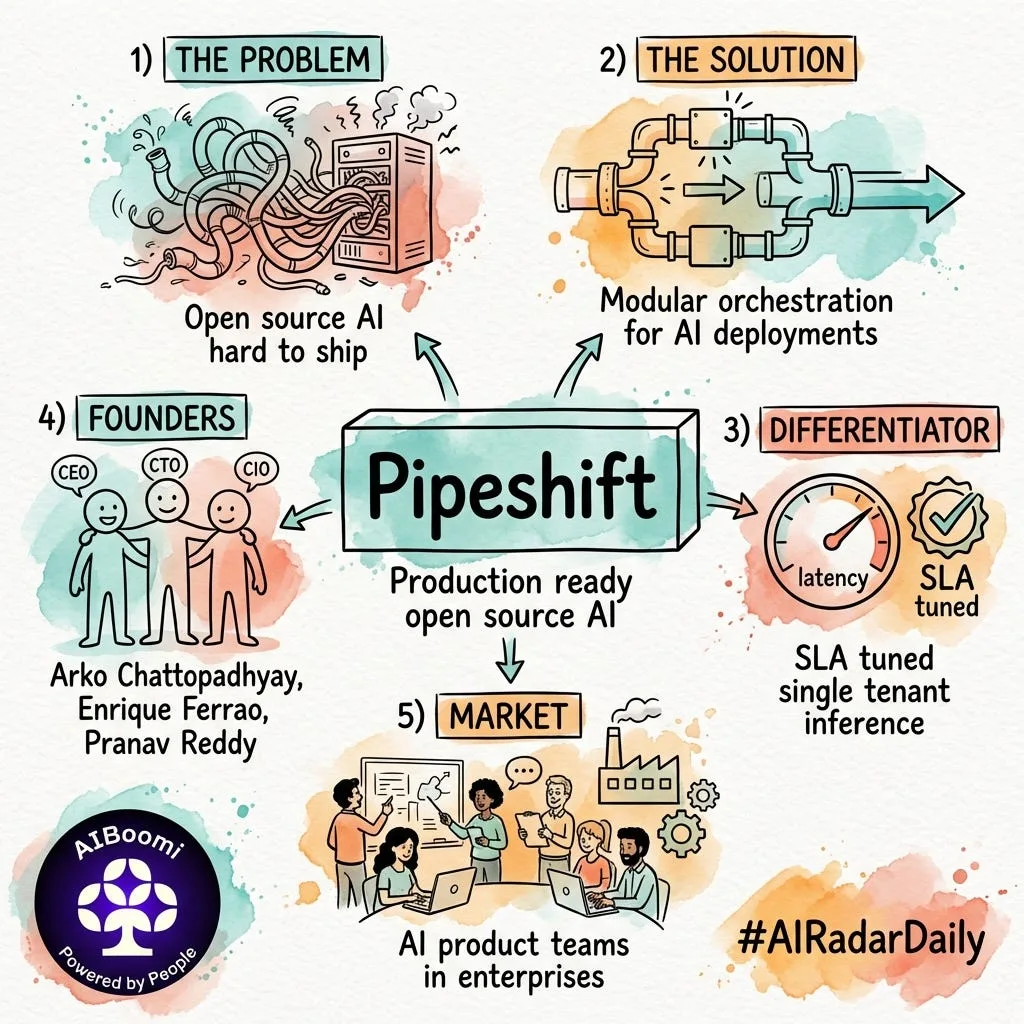

Everyone wants to use open-source models like Llama 3 or Mistral.

Almost no one wants to manage the GPUs required to run them in production.

The gap between “downloading a model” and “serving it at scale” is massive. Crossing it takes expensive hardware, deep infrastructure expertise, and endless optimization to avoid cold starts.

Most teams give up and just default to proprietary APIs.

Pipeshift is closing this infrastructure gap.

Founded by Arko Chattopadhyay, Enrique Ferrao, and Pranav Reddy — who met during their undergrad at Manipal Institute of Technology — Pipeshift is an enterprise MLOps platform that makes deploying open-source LLMs as easy as integrating a payment gateway.

This isn’t just managed hosting. It’s an optimization layer.

They abstract away the metal — handling auto-scaling, GPU provisioning, and fine-tuning pipelines automatically across any cloud or on-prem setup.

The market? Every company that wants the performance of modern AI without locking its data into a closed-source ecosystem.

The founders lived this problem. Back in college, while leading a defense robotics non-profit, they struggled to deploy machine learning models for real-time sensor data. Later, they scaled an enterprise search app for 1,000+ employees entirely on-prem. They realized that while open-source models are incredible, the plumbing required to run them is broken.

Now, they are building that exact plumbing for the global developer community.

Let’s celebrate the builders.

w/ Jay Ingle & Dikshant Joshi

#AIInfrastructure #AIBoomiAnnual26